Six AI capabilities, multi-provider routing, and a strict human-in-the-loop principle — how an open-source tool is rethinking compliance documentation.

A validation specialist at a mid-size pharmaceutical company spends, on average, 30-40% of their working time writing documentation. Test cases derived from requirements. Risk assessments for every system component. Gap analyses against regulatory standards. Corrective action reports for failed tests. Executive summaries for management review. Audit preparation packages.

The work is repetitive but high-stakes. A poorly written test case can miss a critical defect. A risk assessment that underestimates severity can lead to patient harm. A gap analysis that overlooks a regulatory clause can result in a warning letter from the FDA or a non-conformity finding from a notified body.

QAtrial’s AI compliance co-pilot does not replace the human judgment that these tasks require. What it does is eliminate the blank-page problem. It generates first drafts that are context-aware — tuned to the specific country, industry vertical, applicable standards, and risk taxonomy of the project — and then puts every decision in the hands of the quality professional.

The Six AI Capabilities

1. Test Case Generation from Requirements

This is the most immediately useful feature. Point the AI at any requirement, and it generates 4-6 test cases, each with a title, description, numbered test steps, expected results, and a reference to the applicable regulatory standard.

The prompts are not generic. They include the project’s country (which determines the regulatory authority), vertical (which determines the applicable GxP standards), and the requirement’s own metadata — its risk level, regulatory reference, and tags. The AI uses this context to generate tests that are specific to the regulatory environment.

Example: Consider a requirement titled “Audit Trail Event Logging” with the description “The system shall record all create, modify, and delete operations on GxP-critical records with timestamp, user identification, and before/after values” and a regulatory reference of “21 CFR 11.10(e).”

The AI generates tests like:

- Verify Create Operation Logging (Confidence: 95%) — Create a new GxP record. Verify the audit trail captures: timestamp, user ID, action type (create), and full record content. Confirm timestamp is from an NTP-synchronized source per 21 CFR 11.10(e).

- Verify Modification Logging with Before/After Values (Confidence: 93%) — Modify a field in an existing record. Verify the audit trail captures both the previous value and new value. Confirm the entry includes user identification and timestamp.

- Verify Delete Operation Logging (Confidence: 91%) — Attempt to delete a GxP record. Verify the audit trail records the deletion event with the full content of the deleted record, user ID, and reason for deletion.

- Verify Audit Trail Immutability (Confidence: 88%) — Attempt to modify or delete an existing audit trail entry. Verify the system prevents any alteration to historical audit records per 21 CFR 11.10(e) requirements.

- Verify Concurrent User Audit Trail Integrity (Confidence: 85%) — Have two users perform simultaneous modifications to different records. Verify each action is logged independently with correct user attribution and sequential timestamps.

Each test case comes with a confidence score. The quality professional can accept individual tests, edit them before accepting, or reject them entirely. Bulk actions allow accepting all tests above a confidence threshold (for example, accept all tests with 90%+ confidence) or accepting everything at once.

The critical design decision here is that accepted tests are created as real test entities in the system, linked to the originating requirement. They are not suggestions floating in a sidebar — they become part of the project’s traceability matrix.

2. Risk Classification with Taxonomy-Specific Prompts

Risk assessment in regulated industries is not one-size-fits-all. Medical device companies use ISO 14971, which focuses on severity and probability of harm to patients. Pharmaceutical companies use ICH Q9, which takes a broader view of quality risk including product quality, regulatory compliance, and business continuity. Software validation projects use GAMP 5’s risk-based approach, classifying systems by their impact on product quality and patient safety. Aerospace uses FMEA with detectability as a third dimension.

QAtrial’s AI risk classification is aware of these differences. When you classify a requirement’s risk, the prompt includes the project’s vertical and the associated risk taxonomy. The AI returns:

- Severity score (1-5, from Negligible to Critical)

- Likelihood score (1-5, from Rare to Almost Certain)

- Computed risk score (severity multiplied by likelihood, out of 25)

- Risk level (Low, Medium, High, or Critical, based on defined thresholds)

- Safety class (when applicable — for example, FDA Class I/II/III for medical devices, DAL A-E for aerospace)

- Reasoning — a written explanation of why the AI assigned these scores, referencing the applicable taxonomy

The reasoning is the most valuable part. It forces the quality professional to engage with the logic, not just the numbers. If the AI classified a requirement as high severity because it relates to patient data integrity, and the quality professional disagrees because the data in question is non-clinical, they can reject the classification and assign their own scores with documented rationale.

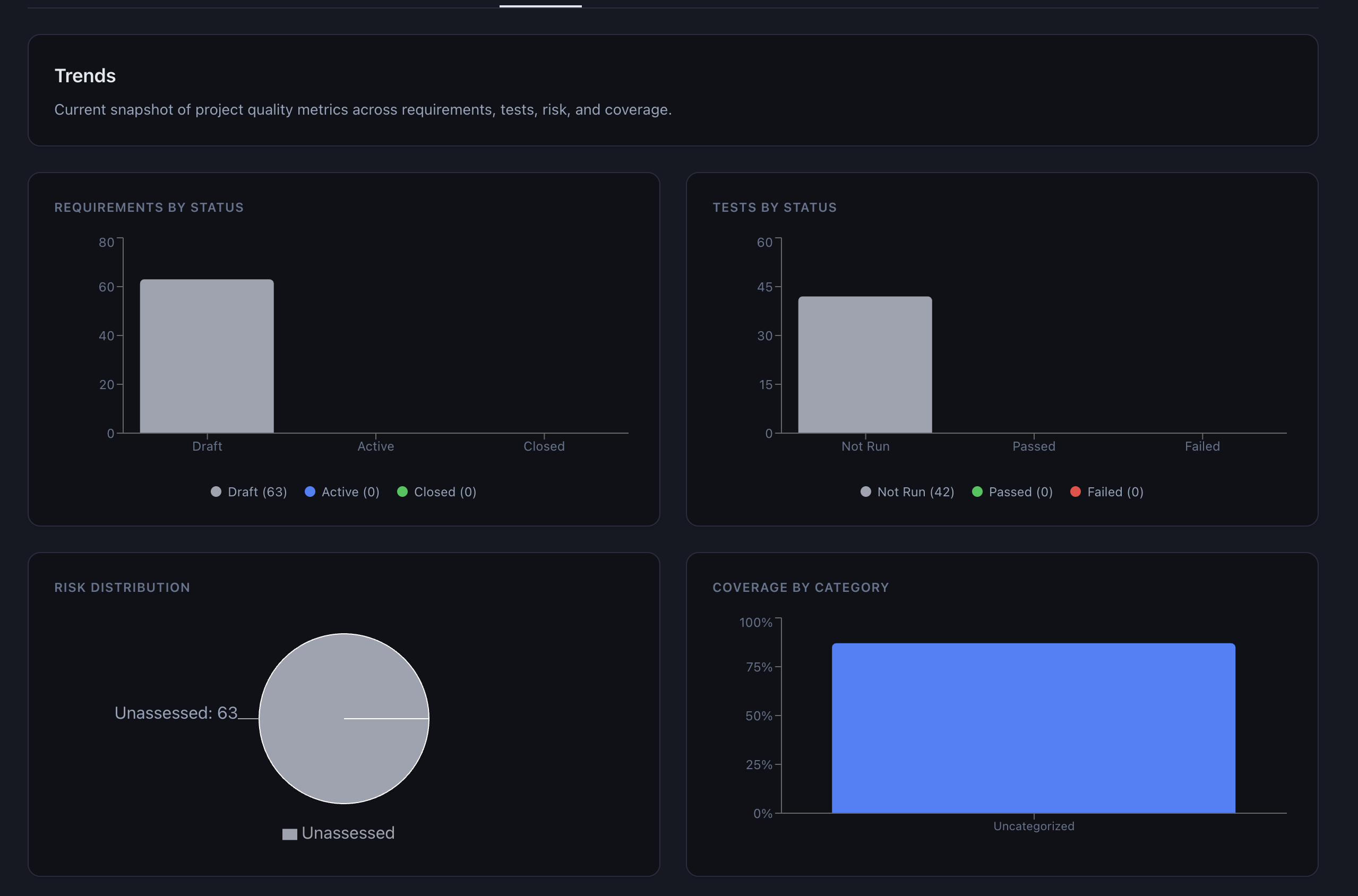

Risk classifications feed directly into the interactive 5×5 risk matrix on the dashboard. A “Classify All Unassessed” button on the Risk tab sends every unassessed requirement to the AI in sequence, which is useful for initial project setup when dozens of requirements need risk scores.

3. Regulatory Gap Analysis

Gap analysis is where the AI’s regulatory knowledge is most directly tested. The system collects the regulatory standards referenced in your project’s requirements (for example, 21 CFR Part 11, EU Annex 11, GAMP 5) and asks the AI to evaluate your requirements and tests against the clauses of those standards.

The results are structured per standard:

- Total clauses analyzed

- Covered: Your project has requirements and tests that address this clause

- Partial: Some aspects of the clause are covered, but gaps exist

- Missing: No requirements or tests address this clause

An overall readiness percentage is calculated (covered counts as 1.0, partial as 0.5, missing as 0.0). Critical gaps are listed with AI-generated suggestions for remediation.

The most powerful feature is the Generate Requirement button on each gap. If the AI identifies that your project is missing coverage for EU Annex 11 Section 7.1 (data storage and backup), you can click a button to create a requirement that addresses that gap directly. This closes the loop between analysis and action.

4. CAPA Suggestions from Failed Tests

When a test fails, the natural next question is: what do we do about it? In regulated industries, the answer is a Corrective and Preventive Action (CAPA) — a structured investigation that identifies the root cause, implements a fix, and prevents recurrence.

QAtrial’s AI CAPA suggestion takes a failed test and its linked requirement as context and generates:

- Root Cause Analysis: What likely caused the failure, based on the test description and expected vs. actual outcome

- Containment Action: What to do immediately to limit the impact

- Corrective Action: What to change to fix the specific problem

- Preventive Action: What systemic change to make to prevent similar failures

- Effectiveness Check Criteria: How to verify that the corrective and preventive actions actually worked

This is explicitly a starting point, not a finished CAPA. Root cause analysis in particular requires investigation of the actual system, process, and evidence — something an AI cannot do. But having a structured draft to work from saves significant time and ensures the CAPA follows the standard five-part structure that auditors expect.

5. Executive Compliance Brief

Quality managers often need to report compliance status to executives who do not want to read a traceability matrix. The executive brief generates a one-page summary with key metrics (requirement coverage, test pass rate, risk distribution, compliance readiness score), highlights (what is going well), concerns (what needs attention), and recommended next actions.

The brief is AI-generated from actual project data, not boilerplate. If your project has 85% requirement coverage but three critical-risk requirements with no linked tests, the brief will flag that specific gap.

6. Validation Summary Report (VSR)

The VSR is the most complex AI-generated artifact. It is a seven-section, audit-ready report that covers:

- Executive Summary

- Scope and Objectives

- Validation Methodology

- System Description

- Results and Findings (with traceability data)

- Deviations and CAPA

- Conclusion and Recommendation

Each section combines structured data from the project (requirement counts, test results, risk assessments) with AI-generated narrative that contextualizes the data for the intended audience — typically auditors or quality management reviewers.

Sections are labeled as either “AI-generated” or “data-assembled,” and each can be individually approved with an electronic signature before the report is finalized.

AI Tax Law, Compliance & Filing Rules: How Algorithms Stay Up-to-Date with IRS Regulations (AI Personal Taxes)

As an affiliate, we earn on qualifying purchases.

As an affiliate, we earn on qualifying purchases.

The Multi-Provider Architecture

QAtrial does not lock you into a single AI vendor. The system supports two provider types:

- Anthropic: Native integration with Claude via the Anthropic Messages API

- OpenAI-compatible: Any API that follows the OpenAI Chat Completions format — this includes OpenAI’s own GPT-4, OpenRouter (which provides access to dozens of models), and local Ollama instances

You can configure multiple providers simultaneously. Each provider is assigned to one or more purposes: Test Generation, Gap Analysis, Risk Classification, Report Narrative, Requirement Decomposition, CAPA, or the catch-all “All.”

Purpose-Scoped Routing

The routing algorithm is straightforward:

- For a given AI task, find all enabled providers whose purpose list includes that specific task

- Sort by priority (lower number = higher priority)

- Use the highest-priority match

- If no specific match exists, fall back to providers configured for “All”

This allows sophisticated configurations. For example:

- Claude Sonnet for report generation (where writing quality matters most), priority 1

- GPT-4o for risk classification and gap analysis (where structured reasoning is key), priority 1

- A local Llama 3.1 via Ollama for test generation during development (where speed matters and data sensitivity is high), priority 1

The Settings page shows a purpose routing table that makes it clear which provider handles each task.

Prompt Engineering for Regulatory Precision

All six prompt templates follow a consistent architecture: a context interface defines required inputs, a builder function constructs the full prompt string with regulatory context, and a main function calls the AI and parses the structured response.

Temperature is set low (0.3 by default) to favor deterministic, precise outputs over creative variation. Structured outputs are requested as JSON with explicit schemas, and the parser handles markdown code fences that some models wrap around JSON responses.

Every AI response includes the provider ID and model name, creating a chain of traceability from the AI suggestion back to the specific model that generated it. This is important for audit purposes — if a regulator asks which AI system was used to generate a risk classification, the answer is recorded in the data.

GxP validation test case generator

As an affiliate, we earn on qualifying purchases.

As an affiliate, we earn on qualifying purchases.

The Human-in-the-Loop Principle

No AI-generated content in QAtrial is automatically applied. Every test case must be accepted or rejected. Every risk classification must be approved or overridden. Every gap analysis suggestion must be reviewed. Every CAPA is a proposal, not an action.

This is not just a design philosophy — it is a regulatory requirement. FDA, EMA, and other authorities expect that qualified personnel make quality decisions. AI can accelerate the work, but the accountability remains with the human.

The confidence scoring system reinforces this. Scores above 90% (green) indicate high AI confidence. Scores between 70-89% (yellow) warrant careful review. Scores below 70% (red) strongly suggest manual intervention. The “Accept High Confidence” bulk action deliberately sets the threshold at 90%, encouraging review of everything below that line.

Heritage Eagle Design Compliance Marking Self-Inking Tool – Stamp + Inspection for Qa Inspectors, Regulatory Managers Manufacturing

Speed: Stamp compliance marks in seconds with the Easy Press and Print self-inking mechanism—no separate ink pad needed,…

As an affiliate, we earn on qualifying purchases.

As an affiliate, we earn on qualifying purchases.

The Privacy Advantage

For organizations with strict data governance requirements — and in regulated industries, that is most of them — the ability to run AI entirely locally is not optional. QAtrial supports Ollama, which means you can run a capable open-source model like Llama 3.1 on your own hardware. No API keys, no data leaving your network, no third-party data processing agreements to negotiate.

The trade-off is capability. Frontier models like Claude and GPT-4 generally produce better results than local models, particularly for complex tasks like gap analysis and report generation. But for organizations where data sovereignty is non-negotiable, having the option to run locally — even with somewhat lower quality outputs — is the difference between using AI and not using it at all.

Pharmaceutical Computer Systems Validation: Quality Assurance, Risk Management and Regulatory Compliance (Drugs and the Pharmaceutical Sciences)

As an affiliate, we earn on qualifying purchases.

As an affiliate, we earn on qualifying purchases.

What This Means for Quality Professionals

The compliance co-pilot does not eliminate the need for domain expertise. It eliminates the drudgery that prevents domain experts from applying their expertise where it matters most.

A validation specialist who spends four hours writing test cases for 20 requirements can now generate first drafts in minutes and spend those four hours reviewing, refining, and thinking critically about edge cases the AI might have missed. A risk manager who spends a day classifying 50 requirements can get AI proposals in minutes and focus their time on the borderline cases where human judgment is essential.

The tool is open source, the AI integration is provider-agnostic, and the human stays in the loop. That is the model for responsible AI in regulated industries.

QAtrial is available at github.com/MeyerThorsten/QAtrial.