Differences in display resolution, color calibration, technology, pixel ratios, and scaling can cause your visual regression test results to vary across devices. Variations in aspect ratios and screen sizes may distort images or misalign elements, leading to false positives or negatives. Proper calibration, consistent environment conditions, and the right tools help minimize discrepancies. If you want to understand how to improve your cross-device testing accuracy, keep exploring these key factors further.

Key Takeaways

- Variations in resolution, aspect ratio, and pixel density can distort visuals and cause mismatched test results across devices.

- Inconsistent color calibration leads to color shifts, resulting in false positives or negatives during visual comparisons.

- Differences in display technologies (OLED, IPS) affect color accuracy and contrast, impacting test consistency.

- Uncontrolled lighting conditions and reflections can alter perceived visuals, skewing regression test outcomes.

- Lack of standardized calibration and environment control increases discrepancies, reducing the reliability of cross-device visual testing.

Why Do Display Differences Impact Visual Regression Testing?

Display differences can significantly affect visual regression testing because variations in screen resolution, color calibration, and display technology change how a website or app appears. Proper display calibration keeps colors accurate and consistent across devices, which is vital for identifying genuine visual issues. When displays aren’t calibrated correctly, color consistency suffers, and a design can appear to have discrepancies that don’t actually exist. These differences can produce false positives or hide real issues, skewing your testing results. Keeping calibration consistent gives you reliable comparisons, and understanding how calibration affects color accuracy helps you interpret visual regressions and verify that your website or app looks as intended on all screens. Awareness of how screen resolution affects rendering also helps developers create adaptive designs that perform well across devices.

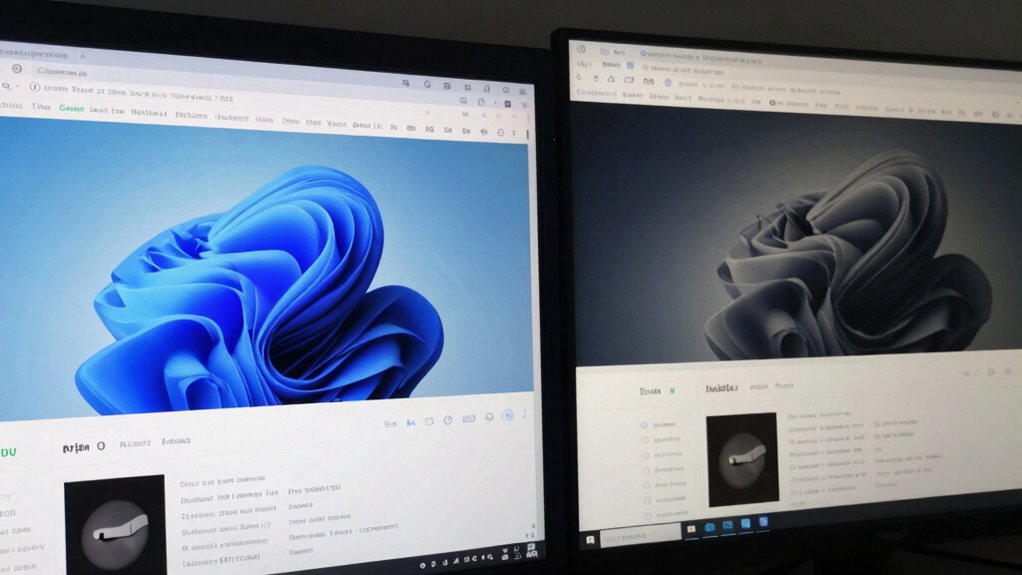

How Do Screen Resolution and Aspect Ratios Vary Across Devices?

Devices come with a wide range of screen resolutions and aspect ratios, which can considerably affect how content appears. Resolution determines the level of detail and sharpness, making images look either crisp or pixelated. Aspect ratio determines the shape of the display, affecting layout and element placement. These differences mean that what looks perfect on one device might be distorted or misaligned on another. Display calibration also matters when testing across multiple screens: without it, colors can appear washed out or overly saturated, skewing visual regression results. By understanding these variations in resolution and aspect ratio, you can better anticipate how your content will render and maintain visual consistency across diverse devices. Layouts that adapt to the available space also tend to hold up better when you optimize for various screen sizes.
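As a rough illustration of how resolutions map to aspect ratios, the sketch below reduces each resolution to its simplest width-to-height ratio and groups devices that share a shape. The device names and resolutions are hypothetical examples, not a recommended test matrix.

```python
from math import gcd


def aspect_ratio(width: int, height: int) -> tuple[int, int]:
    """Reduce a resolution to its simplest width:height ratio."""
    divisor = gcd(width, height)
    return width // divisor, height // divisor


# Hypothetical device list: names and resolutions are illustrative only.
DEVICES = {
    "desktop-1080p": (1920, 1080),
    "laptop-16x10": (1920, 1200),
    "tablet-portrait": (768, 1024),
    "phone-portrait": (1080, 2400),
}


def group_by_aspect_ratio(devices: dict) -> dict:
    """Map each reduced aspect ratio to the device names that share it."""
    groups: dict = {}
    for name, (width, height) in devices.items():
        groups.setdefault(aspect_ratio(width, height), []).append(name)
    return groups
```

Grouping this way makes it easier to see which display shapes a test run covers and which it misses.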

How Do Color Calibration and Display Technologies Affect Test Results?

Have you ever wondered how color accuracy impacts your visual regression tests? It plays an essential role because even slight variations can make your test results inconsistent across devices. Proper display calibration guarantees that colors are rendered accurately, aligning with industry standards and reducing discrepancies. Without calibration, different screens may display the same image with noticeable color shifts, causing false positives or negatives in tests. Modern display technologies, like OLED and IPS, offer enhanced color reproduction, but they still require calibration to achieve consistent results. When your displays are properly calibrated, color accuracy improves, making your testing more reliable. This consistency helps you identify genuine visual issues rather than artifacts caused by display differences.
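One way to put a number on a color shift is a color-difference metric. The sketch below converts 8-bit sRGB values to CIELAB (assuming a D65 white point) and computes the CIE76 difference, which is simply the Euclidean distance in Lab space. CIE76 is chosen here for brevity, not because it is the most accurate formula, and any pass/fail threshold you apply to the result is something you would need to tune for your own tests.

```python
import math


def _srgb_to_linear(channel: float) -> float:
    """Undo the sRGB transfer curve for one channel in the range 0..1."""
    if channel <= 0.04045:
        return channel / 12.92
    return ((channel + 0.055) / 1.055) ** 2.4


def _lab_f(t: float) -> float:
    """Piecewise function used by the XYZ to Lab conversion."""
    delta = 6 / 29
    if t > delta ** 3:
        return t ** (1 / 3)
    return t / (3 * delta ** 2) + 4 / 29


def srgb_to_lab(rgb: tuple) -> tuple:
    """Convert an 8-bit sRGB triple to CIELAB, assuming a D65 white point."""
    r, g, b = (_srgb_to_linear(c / 255.0) for c in rgb)
    # Linear sRGB to CIE XYZ (D65).
    x = 0.4124564 * r + 0.3575761 * g + 0.1804375 * b
    y = 0.2126729 * r + 0.7151522 * g + 0.0721750 * b
    z = 0.0193339 * r + 0.1191920 * g + 0.9503041 * b
    # Normalize by the D65 reference white before applying f.
    fx, fy, fz = _lab_f(x / 0.95047), _lab_f(y / 1.0), _lab_f(z / 1.08883)
    return 116 * fy - 16, 500 * (fx - fy), 200 * (fy - fz)


def delta_e76(rgb_a: tuple, rgb_b: tuple) -> float:
    """CIE76 color difference: Euclidean distance between two Lab colors."""
    return math.dist(srgb_to_lab(rgb_a), srgb_to_lab(rgb_b))
```

A small result for two nominally identical colors suggests a rendering or display artifact rather than a design change; a large one deserves a closer look.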

What Role Do Device Pixel Ratios and Scaling Play in Visual Testing?

Device pixel ratios and screen scaling considerably influence how your visuals appear across different displays. Higher pixel ratios can make images look sharper, but they may also cause layout issues if not properly tested. Understanding these factors helps keep your designs consistent, no matter which device you’re testing on.

Impact of Device Pixel Ratios

Device pixel ratios and scaling considerably influence how visuals appear across various screens, making them critical considerations in visual regression testing. Higher pixel density screens, like Retina displays, pack more pixels into the same space, resulting in sharper images. If your images aren’t supplied at a resolution suited to these densities, they may look blurry when scaled up, skewing test results. Display calibration keeps color and brightness consistent, but it doesn’t address pixel density variations. When testing across devices with different device pixel ratios, you might see mismatched layouts or unexpected visual artifacts, and screenshots of the same layout will have different pixel dimensions: a capture at a ratio of 2 is twice as wide and twice as tall as one at a ratio of 1. To get accurate results, you need to account for these ratios during testing, ensuring your images are scaled correctly and that your visual assertions reflect how users see your content on each device.
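The relationship between layout size and screenshot size can be sketched with a little arithmetic. The helper names below are hypothetical; the only assumption is that a screenshot's pixel dimensions equal the CSS viewport dimensions multiplied by the device pixel ratio.

```python
def physical_size(css_width: int, css_height: int, dpr: float) -> tuple[int, int]:
    """Pixel dimensions of a screenshot for a CSS viewport at a given ratio."""
    return round(css_width * dpr), round(css_height * dpr)


def css_size(pixel_width: int, pixel_height: int, dpr: float) -> tuple[int, int]:
    """Recover the CSS viewport size from a screenshot's pixel dimensions."""
    return round(pixel_width / dpr), round(pixel_height / dpr)


def same_layout_size(shot_a: tuple, shot_b: tuple) -> bool:
    """Check whether two (pixel_width, pixel_height, dpr) captures cover the
    same CSS viewport, and so can be compared after rescaling."""
    return css_size(*shot_a) == css_size(*shot_b)
```

For example, `physical_size(390, 844, 3)` gives `(1170, 2532)`, so a 390 by 844 viewport captured at a ratio of 3 and at a ratio of 2 shows the same layout at different pixel sizes.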

Effects of Screen Scaling

Screen scaling plays a crucial role in visual regression testing because it directly affects how images and layouts appear across different devices. Device pixel ratios and scaling settings influence how content is rendered, so elements may look distorted or misaligned when scaling differs between captures, leading to false test failures. If scaling isn’t correctly managed, tests might flag discrepancies caused by the scaling process rather than actual design issues. By understanding how screen scaling affects rendering, you can adjust your testing environment to account for these variations, improving the reliability of your visual regression results across diverse displays. Incorporating high-quality, calibrated monitors into your testing setup can further improve the accuracy of visual assessments, and understanding contrast ratios helps you evaluate how well images are rendered across different screen types. It is equally important that your testing environment mimics real-world usage.
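One way to compare captures taken at different scale factors is to bring them to a common size first. The sketch below downscales a grayscale image, represented as a list of rows of 0 to 255 values, by an integer factor using box averaging. It is a minimal illustration under those assumptions; a real pipeline would more likely use an imaging library and handle color channels and non-integer factors.

```python
def downscale(pixels: list, factor: int) -> list:
    """Downscale a grayscale image by an integer factor using box averaging.

    Each output pixel is the rounded mean of a factor x factor block of
    input pixels. Dimensions must be exact multiples of the factor.
    """
    if factor < 1:
        raise ValueError("factor must be a positive integer")
    height, width = len(pixels), len(pixels[0])
    if height % factor or width % factor:
        raise ValueError("image dimensions must be multiples of the factor")

    result = []
    for top in range(0, height, factor):
        row = []
        for left in range(0, width, factor):
            block = [
                pixels[y][x]
                for y in range(top, top + factor)
                for x in range(left, left + factor)
            ]
            row.append(round(sum(block) / len(block)))
        result.append(row)
    return result
```

Downscaling the higher-density capture, rather than upscaling the lower one, avoids inventing detail that was never rendered.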

What Are the Best Practices for Cross-Device Visual Regression Testing?

For accurate cross-device visual regression testing, select a consistent set of devices for comparison and maintain a standardized testing environment to reduce variability caused by different setups. Applying these practices helps you identify genuine visual discrepancies across displays. Using tools that support cross-browser testing can also help you check visual outcomes across different browsers and devices.

Consistent Device Selection

Choosing the right devices for visual regression testing is essential to keep your website looking consistent across all user environments. Focus on devices that reflect how your users actually interact with your product, so that your tests cover realistic usage scenarios. Make sure the screens you pick represent the resolutions and aspect ratios users commonly encounter. Avoid testing only high-end devices; include a mix of smartphones, tablets, and desktops to capture a broad range of display characteristics. Consistent device selection helps identify visual discrepancies caused by different hardware setups, making your testing more reliable. Keep device choices aligned with your target audience’s preferences and behaviors, so your visuals perform well across the diverse devices your users employ daily.

Standardized Testing Environment

Establishing a standardized testing environment is essential for ensuring consistent and reliable visual regression results across multiple devices. To achieve this, you should focus on three key practices:

- Regularly calibrate displays to maintain accurate color profiles, minimizing discrepancies caused by hardware drift.

- Use consistent color profiles across all devices, ensuring colors appear uniform regardless of screen type.

- Control ambient lighting to reduce glare and reflections, which can alter perception and impact test accuracy.
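The practices above can be backed by a simple guard in the test harness: record the settings each screenshot was captured under and refuse to compare captures whose settings differ. The sketch below is one possible shape for that check; the `CaptureEnvironment` fields and the example values are assumptions chosen for illustration, not a standard schema.

```python
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class CaptureEnvironment:
    """Settings a screenshot was captured under (hypothetical field set)."""
    viewport: tuple[int, int]
    device_pixel_ratio: float
    color_profile: str
    browser: str


def environment_mismatches(baseline: CaptureEnvironment,
                           candidate: CaptureEnvironment) -> dict:
    """Return each setting that differs as {name: (baseline, candidate)}."""
    base, cand = asdict(baseline), asdict(candidate)
    return {key: (base[key], cand[key]) for key in base if base[key] != cand[key]}


# Example values are illustrative only.
BASELINE_ENV = CaptureEnvironment((1280, 720), 1.0, "sRGB", "chromium")
CANDIDATE_ENV = CaptureEnvironment((1280, 720), 1.0, "Display P3", "chromium")
```

An empty result means the two captures are comparable; a non-empty one, such as the color profile difference in the example values, points to an environment change rather than a design regression.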

Which Tools Help Minimize Display-Related Discrepancies?

Several kinds of tools help minimize display-related discrepancies in visual regression testing. Display calibration tools adjust monitors to standardized color, brightness, and contrast settings, reducing variability caused by hardware differences. Saving screenshots in a lossless format, or with identical compression settings, keeps compression artifacts from showing up as visual differences. Automated visual testing platforms like Percy and Applitools capture and compare screenshots across browsers and viewport sizes, helping you catch discrepancies early. Additionally, services like BrowserStack and Sauce Labs give you access to a range of real and virtual devices, allowing you to see how your designs perform across multiple screens. Using a combination of these tools helps your tests account for hardware variations, ultimately delivering more reliable, consistent visual results.

Common Mistakes When Testing on Different Screens and How to Avoid Them

One common mistake in testing on different screens is assuming that a single set of configurations will work universally without adjustments. This can lead to inaccurate results due to variations in color accuracy and screen brightness. To avoid this, focus on these key areas:

- Ignoring color calibration: each display has a different color profile, so calibrate every device to the same target.

- Overlooking screen brightness differences—adjust brightness levels to match a baseline, as inconsistent brightness impacts visual comparisons.

- Neglecting environmental lighting—test in controlled lighting conditions to prevent external light from skewing your perception of color and brightness.

How Can I Adjust Test Results for Accurate Cross-Device Comparison?

To make your test results comparable across different devices, you need adjustment techniques that account for display variations. Start with proper display calibration to minimize discrepancies caused by hardware differences. This involves adjusting brightness, contrast, and gamma settings to standardize your monitors. Focus on color accuracy by using color calibration tools or software to align colors across devices, reducing mismatched hues that can skew test results. Consider using ICC color profiles to maintain consistent color representation. Additionally, use visual regression testing tools that support cross-device comparison and configurable difference thresholds, which can absorb minor rendering differences and highlight genuine visual changes. These steps help you achieve more reliable, comparable results, so your visual regression testing reflects real-world user experiences across all tested devices.
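A tolerance-based comparison is one simple way to separate small rendering or color differences from genuine changes. The sketch below counts a pixel as mismatched only when some channel differs by more than a tolerance, then passes the comparison if the share of mismatched pixels stays under a limit. Images are assumed to be lists of rows of RGB tuples, and the default tolerance and ratio are placeholder values to tune, not recommendations.

```python
def mismatch_ratio(baseline: list, candidate: list, channel_tolerance: int = 0) -> float:
    """Fraction of pixels where any channel differs by more than the tolerance."""
    if len(baseline) != len(candidate) or any(
        len(row_a) != len(row_b) for row_a, row_b in zip(baseline, candidate)
    ):
        raise ValueError("images must have identical dimensions")

    total = mismatched = 0
    for row_a, row_b in zip(baseline, candidate):
        for pixel_a, pixel_b in zip(row_a, row_b):
            total += 1
            if any(abs(a - b) > channel_tolerance for a, b in zip(pixel_a, pixel_b)):
                mismatched += 1
    return mismatched / total if total else 0.0


def images_match(baseline: list, candidate: list,
                 channel_tolerance: int = 8,
                 max_mismatch_ratio: float = 0.001) -> bool:
    """Pass when the mismatched share of pixels is within the allowed ratio."""
    ratio = mismatch_ratio(baseline, candidate, channel_tolerance)
    return ratio <= max_mismatch_ratio
```

Setting the tolerance too high hides real regressions, so it is worth starting strict and loosening only as far as the noise in your environment requires.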

Frequently Asked Questions

How Can I Standardize Visuals Across Diverse Display Types Effectively?

To standardize visuals across diverse display types, focus on maintaining color consistency and managing screen resolution. Use color profiles and calibration tools to guarantee colors match accurately across devices. Additionally, set a baseline resolution and design your visuals at that standard, then test on various screens to tweak as needed. This approach helps you achieve more uniform results, reducing discrepancies caused by differing display technologies.

What Are the Limitations of Current Visual Regression Testing Tools?

Current visual regression testing tools often struggle with limitations like maintaining color accuracy across different displays and handling varying screen resolutions. You might find that these tools don’t detect subtle color shifts or resolution discrepancies, leading to mismatched results. Additionally, they sometimes lack robust support for diverse device types, making it challenging to guarantee consistent visuals. As a result, you could miss visual bugs or get false positives, affecting your testing reliability.

How Does Ambient Lighting Influence Visual Testing Outcomes?

Ambient lighting considerably influences your visual testing outcomes by affecting color consistency and overall image perception. In bright or uneven lighting, colors may appear washed out or distorted, leading to false positives or negatives during testing. To guarantee accurate results, you should perform tests in controlled lighting conditions, minimizing ambient light variations. This helps maintain consistent color display and reliable comparisons across different screens and environments.

Can Hardware Differences Cause False Positives in Test Results?

Yes, hardware differences can cause false positives in your test results. Variations in display calibration and hardware variability influence how images appear, leading to mismatched visual comparisons. When monitors aren’t calibrated properly or if different devices have unique display characteristics, your tests might flag differences that aren’t truly there. To minimize this, guarantee consistent display calibration and account for hardware variability across all devices used in testing.

How Often Should Display Calibration Be Performed for Accurate Testing?

You should calibrate your display at least once every four to six weeks to maintain color accuracy. Displays drift over time, and the resulting color deviations can become large enough to affect test results. Regular calibration keeps visual regression testing consistent and reliable, especially when comparing across different displays. By maintaining a steady calibration schedule, you minimize false positives and keep your test outcomes accurate and trustworthy.
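A calibration schedule is easy to enforce with a small date check. The sketch below flags a display as due once the chosen interval has elapsed; the six-week default follows the upper end of the range suggested above, and the function name is hypothetical.

```python
from datetime import date, timedelta


def calibration_due(last_calibrated: date, today: date, interval_weeks: int = 6) -> bool:
    """Return True once at least interval_weeks have passed since calibration."""
    return today - last_calibrated >= timedelta(weeks=interval_weeks)
```

A check like this could run at the start of a test session and warn before any screenshots are reviewed on an overdue display.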

Conclusion

In the end, small adjustments in display settings can have a large effect on your visual regression tests. By understanding device differences and applying best practices, you’ll find that your results align more closely across screens. Treat these nuances as opportunities to refine your testing approach. In cross-device testing, attention to detail is what turns inconsistent results into consistent ones, helping your designs look right on every screen.